Artificial intelligence promises efficiency, insight and competitive advantage - but it also brings with it a growing environmental footprint and familiar challenges for data protection and governance. Deputy Data Protection Commissioner Rachel Masterton explores how organisations can move away from a ‘bigger is better’ mindset and towards more thoughtful, sustainable approaches to AI.

Whatever your thoughts are about AI and its place in our world, three things are certain.

- It brings enormous potential to improve our world and is not going away

- It brings up new and novel challenges for data protection and privacy

- In its current form it is incredibly resource intensive

A 2019 study by the University of Massachusetts Amherst found that the carbon footprint of training the most resource-intensive AI model they tested was roughly equivalent to the lifetime emissions of five cars. Worryingly, this figure included not only the petrol or diesel consumed by each car but also the building of the vehicles in the first place. More worrying still, the cars in question were American cars, not renowned for their fuel efficiency. Training that system is the equivalent of nine European cars and almost eleven Japanese ones.

So the use of AI is not only an IT and/or data protection challenge but an ESG (Environmental, Social, and Governance) issue too. But what does that mean, and how can you meet your business needs with due consideration of data protection and sustainability? Read on!

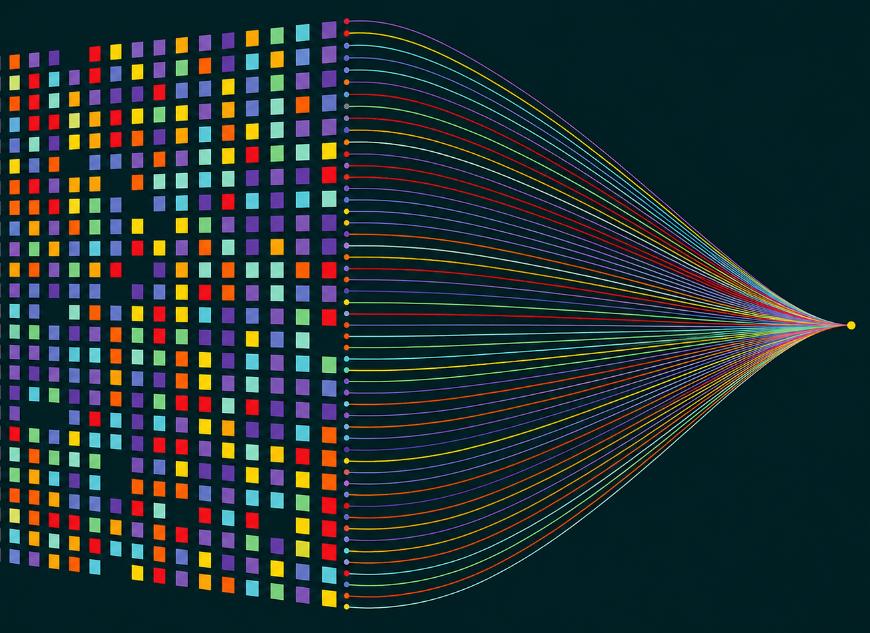

It took 75 years for the humble telephone to rack up 100 million users. It took ChatGPT two months. AI, especially generative AI, has had such an impact because it is big. Literally, it has the word ‘large’ in one of its key components (Large Language Models or LLMs*). But this brings with it the intensive resource requirements mentioned above at a time when businesses are being encouraged to think sustainably and be environmentally conscious. So I introduce a relatively new concept (well, new to me at least), Small Language Models or SLMs.

SLMs are efficient, often task-focused models, created using specific information for specific purposes. It is important to realise that whilst they may be small, we are still talking about 100 million to 10 billion parameters. Small by comparison to the hundreds of billions or trillions of parameters of their large siblings but arguably having a bigger impact on efficiency. By being designed with clear purposes in mind, they have specialised, savant-like knowledge rather than having to be everything to everyone.
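To put those parameter counts into perspective, here is a rough back-of-the-envelope sketch. It assumes each parameter is stored as a 16-bit number (2 bytes), which is my assumption rather than a property of any particular model, and the 500 billion figure is simply an illustrative stand-in for "hundreds of billions". It only estimates the storage needed for the model's weights, not the energy used to train or run it.

```python
# Assumption: each parameter is stored in 16 bits (2 bytes).
BYTES_PER_PARAM = 2


def weights_gb(parameters, bytes_per_param=BYTES_PER_PARAM):
    """Approximate size of a model's weights in gigabytes."""
    return parameters * bytes_per_param / 1e9


# The SLM range comes from the article; the LLM figure is illustrative only.
examples = [
    ("SLM, lower end", 100_000_000),
    ("SLM, upper end", 10_000_000_000),
    ("LLM, illustrative", 500_000_000_000),
]

for label, parameters in examples:
    print(f"{label}: about {weights_gb(parameters):,.1f} GB of weights")
```

Under that assumption, the smallest SLMs would fit comfortably on an ordinary laptop, while the illustrative LLM would need roughly fifty times the storage of even the largest SLM.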

Therefore, you could train and deploy an SLM within an organisation, feeding it only information you have approved and keeping it away from the expanse of the internet. A law firm, for example, could train an in-house model based on tried and tested sources: approved law, official judgments and academic-level analysis - highly curated, synthetic (more later) or domain-specific datasets that provide less noise and more targeted responses.

By reducing the size, you are reducing the environmental burden that comes with LLMs. The World Economic Forum suggests that emissions from SLMs could be up to 90% lower than those from standard LLMs. If the model is trained well, on reliable sources, you are also improving the output, making it more effective and more efficient. And if you are limiting access to the World Wide Web, you are also reducing the chances of data leaking from your solution, a real fear for many.

Now it is true that if you have a focused AI solution, it would be really good at some tasks and substantially less good at many others. Using our law firm example, it might be brilliant at finding reliable, accurate jurisprudence but terrible at advising why your sourdough loaves always have soggy bottoms. But then, if you were looking for in-person guidance on your baking, you probably wouldn’t ask your lawyer either. Perhaps complement your bespoke, reliable and frequently used SLM with carefully controlled access to an LLM for more general tasks.

Another way organisations are beginning to think more carefully about efficiency, from both a privacy and an environmental perspective, is through the use of privacy-preserving synthetic data. In simple terms, this is data that is artificially generated to reflect the patterns and characteristics of real-world information, without relating to any identifiable individual. Rather than copying or disguising existing records, models learn how the original data behaves and then generate new, synthetic versions that retain analytical value without carrying the same risks.
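For the more technically minded, the idea of learning how data behaves and then generating new records can be sketched in a few lines of Python. This is a deliberately simple toy, not a production technique: the records are made up, the function names `fit_columns` and `sample_synthetic` are my own, and it only learns the average and spread of each column independently. Real synthetic data tools use far richer models and, crucially, test whether individuals could still be re-identified, which this sketch does not do.

```python
import random
import statistics


def fit_columns(records):
    """Learn the mean and standard deviation of each column."""
    columns = list(zip(*records))
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]


def sample_synthetic(params, n, seed=0):
    """Draw n new records from the learned per-column distributions."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    return [
        tuple(rng.gauss(mean, spread) for mean, spread in params)
        for _ in range(n)
    ]


# Made-up "real" records: (age, annual salary in thousands).
real = [(34, 41.0), (45, 52.5), (29, 38.0), (51, 60.0), (38, 47.5)]

params = fit_columns(real)
synthetic = sample_synthetic(params, n=1000)

real_mean_age = statistics.mean(row[0] for row in real)
synthetic_mean_age = statistics.mean(row[0] for row in synthetic)
print(f"Average age, real data: {real_mean_age:.1f}")
print(f"Average age, synthetic data: {synthetic_mean_age:.1f}")
```

The synthetic records follow the same broad pattern as the originals without copying any of them. Because each column is modelled on its own, relationships between columns (such as salary rising with age) are lost, which is one reason real-world approaches are considerably more sophisticated.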

Done well, the privacy benefits are clear. Because synthetic data does not directly describe real people, it can allow organisations to test systems, analyse trends, develop software or train AI models while significantly reducing exposure under data protection law. It supports a more proportionate approach to data use, enabling insight and innovation without defaulting to the continued use of live personal data where it is not truly necessary.

There is a complementary sustainability dimension. Synthetic data can reduce the need to repeatedly collect, store and process large volumes of real-world personal data, cutting down on storage demands and computational overhead. Once created, it can often be reused across multiple projects, avoiding duplicated datasets and inefficient retraining cycles. In this way, privacy-preserving synthetic data reinforces the same principle that underpins smaller, more focused AI models: thoughtful design choices can deliver better outcomes for organisations, for individuals, and for the environment.

Rather than a jack of all trades, create and work with a master. ‘Right size’ your solution. Bigger is not always better, unless it’s baked goods.

*A term I struggle to get on board with as it has rather usurped the other uses of LLM (‘Master of Laws’ and ‘Licensed Lay Minister’).